Just over a year ago I wrote about my own attempts to use AI tools to generate code. My results were mixed: there were some wins, but lots of frustration. Overall it left me skeptical about whether AI-assisted development was ready for large, enterprise-quality projects. My conclusion was that LLMs were useful for small apps and tasks but not consistent and trustworthy enough to meet a professional developer’s day-to-day needs.

That assessment no longer holds. At this point, using AI tools in your development work is not optional if you want to stay competitive.

LLMs improved dramatically over the past year, across multiple dimensions simultaneously. Models became meaningfully better at generating correct code. They can now hold large codebases in context without losing the thread. Reasoning improved. And the tools built around these models matured enough to make the capabilities practically accessible rather than just theoretically impressive. The result is that AI-assisted development crossed a threshold it had not reached before: it is now very fast, yet accurate enough to significantly accelerate serious work.

I’ll add the caveat that LLMs are still not, and will likely never be, perfect, so they still require proper oversight. But teams and individuals who are intelligently leveraging these tools are delivering more, faster, at production quality.

Genesis of this Post

I wanted to write on this topic, so I began reading up to get other perspectives. Over time I realized that many of the points I intended to raise were already well addressed in other sources. In some cases, their authors had experienced and thought through aspects of AI usage that I had not yet encountered.

Fundamentally, what I found was that the debate over using AI for coding is pretty much over, and folks have moved on to considering its deeper implications.

It makes more sense to point you directly at the most interesting of these sources rather than trying to rephrase their perspectives in my own writing. Hence this post, in which I share some of those sources for your consideration.

Thoughtworks Radar

I’ll start with the Thoughtworks Technology Radar, one of my favorite sources for understanding where the industry is actually heading. I wrote about it some years back. Thoughtworks publishes it regularly, grouping technologies into four rings: Adopt, Trial, Assess, and Caution. Adopt means use it now; Caution means approach carefully or avoid. The middle two rings, Trial and Assess, reflect things that are promising but not yet settled.

What struck me about this latest volume is the sheer density of AI entries. Of the 118 items I counted across all categories, 80 either use AI (mostly, but not only, LLMs) directly for development or help facilitate the development of AI systems. That alone tells you something about where serious engineering teams are spending their time.

Let me pick just three examples to give you a feel for the range.

Context engineering (a step up from prompt engineering) has reached Adopt. The radar describes it as treating the AI’s context window as a design surface rather than a text box, carefully managing what the model knows at each step of a task. If you’ve used any of the current coding agents and noticed how much better they perform when you give them the right information at the right time, you’ve already experienced why this matters.

Feedback sensors for coding agents sits at Trial, meaning teams are actively experimenting with it. The idea is to wire compilers, linters, and test results directly into agentic workflows so the agent can observe when something breaks and self-correct, rather than waiting for a human to notice. It’s an early but practical step toward more autonomous development.
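To make the idea concrete, here is a minimal Python sketch of such a loop. It is an illustration under stated assumptions, not how any particular agent or radar entry works: `run_with_feedback`, `check_add_function`, and `scripted_agent` are hypothetical names, Python’s built-in `compile()` stands in for a compiler, a single assertion stands in for a test suite, and a scripted list of drafts stands in for the model.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

# Hypothetical interfaces for illustration only; no real agent framework is implied.
Generate = Callable[[List[str]], str]  # feedback from last attempt -> new candidate source
Check = Callable[[str], List[str]]     # candidate source -> error messages (empty = pass)


def check_add_function(source: str) -> List[str]:
    """Toy 'sensors': a compile step plus one unit test for a function named add."""
    try:
        code = compile(source, "<candidate>", "exec")  # compiler sensor
    except SyntaxError as exc:
        return [f"compile error: {exc.msg} (line {exc.lineno})"]
    namespace: Dict[str, Any] = {}
    try:
        exec(code, namespace)  # NOTE: a real setup would run generated code in a sandbox
        result = namespace["add"](2, 3)  # test sensor
    except Exception as exc:
        return [f"test error: {type(exc).__name__}: {exc}"]
    if result != 5:
        return [f"test failed: add(2, 3) returned {result!r}, expected 5"]
    return []


def run_with_feedback(
    generate: Generate, check: Check, max_attempts: int = 3
) -> Tuple[Optional[str], int]:
    """Generate, run the checks, feed any errors back, and retry until they pass."""
    feedback: List[str] = []
    for attempt in range(1, max_attempts + 1):
        candidate = generate(feedback)
        feedback = check(candidate)
        if not feedback:
            return candidate, attempt  # the agent fixed itself; no human had to notice
    return None, max_attempts  # still failing: hand off to a human reviewer


def scripted_agent(drafts: List[str], seen: List[List[str]]) -> Generate:
    """Stand-in for an LLM: returns canned drafts and records the feedback it was given."""
    remaining = iter(drafts)

    def generate(feedback: List[str]) -> str:
        seen.append(list(feedback))
        return next(remaining)

    return generate


drafts = [
    "def add(a, b) return a + b",        # syntax error: missing colon
    "def add(a, b):\n    return a - b",  # compiles, but gives the wrong answer
    "def add(a, b):\n    return a + b",  # correct
]
seen: List[List[str]] = []
final, attempts = run_with_feedback(scripted_agent(drafts, seen), check_add_function)
print(attempts)  # 3
```

Each attempt’s error messages become the input to the next one, so the loop surfaces the syntax error first, then the failing test, and only escalates to a person if it runs out of attempts.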

In the Caution category: AI-accelerated shadow IT. As AI tools make it easier for non-engineers to build functional workflows, organizations are seeing the same ungoverned, untracked systems that IT departments have fought for decades, only now they appear much faster and at greater complexity. It’s a real risk, and the radar flags it plainly.

Browse the full radar yourself. The range and volume of AI-related entries are striking.

But reading about what teams are adopting is different from reading about what it actually feels like to build something serious with these tools. That’s where Lalit Maganti comes in.

From Impossible to Feasible

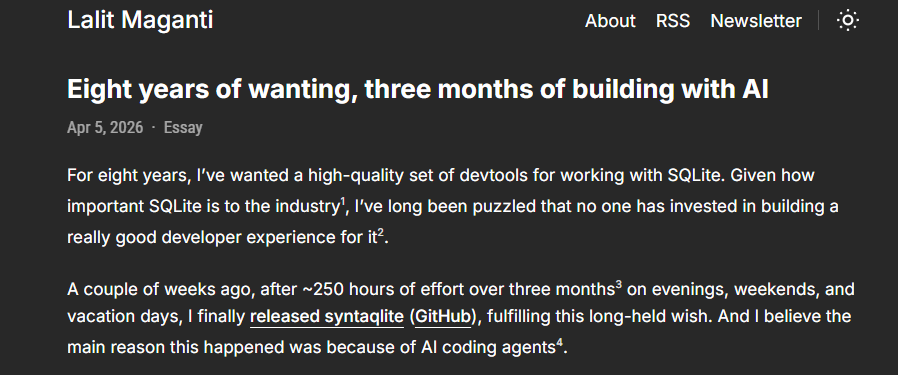

Lalit’s experiences developing with AI mirror some of what I am seeing today, and some of what I wrote about in the past. He dove much deeper than I have, building a serious open source project rather than small exploratory apps. It helped me see that what I was observing at a shallower level held up under more demanding, sustained use.

Maganti had wanted to build a set of developer tools for SQLite for eight years. With AI assistance, he shipped it in three months of evenings and weekends. This goes beyond simply accelerating work: it is an example of an infeasible project becoming realistic.

His article is also valuable for its honesty about where AI falls short. He found that AI was genuinely faster and often better than him at writing standard implementation code. Where it became actively harmful was in architecture and API design. He lost an entire month to vibe coding, building a codebase he could no longer comprehend, and had to do a full rewrite.

Thoughtworks has a name for the issue he initially hit and flags it as a Caution item in their latest radar: Codebase Cognitive Debt. As more code gets generated by AI, developers adopt solutions without building the mental models needed to understand them, and over time the system becomes harder to reason about, debug, and evolve. Lalit ran straight into this, paid for it with a month of lost work, and had to restructure his entire approach.

His closing observation is worth remembering: AI is an incredible force multiplier for implementation, but it is a dangerous substitute for design.

That balance between acceleration and the continued need for human judgment is a recurring topic in all of the articles I am sharing here. Steve Yegge’s article takes on the demands these highly productive tools place upon software engineers.

Keeping up with AIs

Steve Yegge is a well-known and respected voice in the software development community. His take on AI-assisted development comes from a place of serious, sustained use. He has been building at full speed with these tools for months.

His article is less about the technology itself and more about what happens to people when they start using it seriously. His central observation is that the speed and power of AI-assisted development are genuinely addictive, and that the productivity gains are real enough to create a dangerous dynamic between developers and their employers. He calls this the AI Vampire: the tools drain human energy even as they amplify output, and companies, seeing the productivity gains, will naturally try to extract as much of that value as possible.

His value capture framing is worth thinking about. If you work at 10x productivity for the same salary, your employer captures all the gain. If you dial back your hours to match your previous output, you capture it, but your company falls behind competitors who are pushing harder. Neither extreme works. The right answer is somewhere in the middle, and nobody has figured out where that is yet.

Yegge includes himself in the problem. He is one of the early adopters setting unrealistic expectations, shipping constantly, working unsustainable hours, and inadvertently signaling to employers that this pace is normal. He is trying to dial it back.

It is a genuinely different kind of article about AI development, less about what the tools can do and more about how we manage our role when working with them.

Armin Ronacher’s piece is shorter and more focused, but it lands on a related pressure point: not the human cost of AI speed, but the question of what still requires humans at all.

Human Accountability, Bottleneck, or Both?

Armin Ronacher’s article is short and worth reading in full. His starting observation is simple: historically, writing code was slower than reviewing it. That relationship has now flipped, and he argues we have not come close to reckoning with what that means.

His bottleneck framing is useful. When one part of a pipeline speeds up dramatically, the constraint moves downstream. He points to the Industrial Revolution as a precedent: speed up weaving and yarn becomes the bottleneck; speed up spinning and the raw fibre supply becomes the problem. In software right now, code generation has accelerated to the point where review, verification, and human understanding are the constraint. Some open source projects already have thousands of open pull requests, many of which will never be merged because they have fallen too far out of date before anyone could look at them.
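A few lines of Python make the arithmetic of that shift visible. The stage names and rates below are made-up illustrative numbers, not measurements, and `bottleneck` and `review_backlog` are hypothetical helpers written for this sketch. The only point is that a serial pipeline moves at the pace of its slowest stage, so speeding up code generation relocates the constraint to review and lets a queue build in front of it.

```python
from typing import Dict, Tuple


def bottleneck(stage_rates: Dict[str, float]) -> Tuple[str, float]:
    """A serial pipeline can only finish work as fast as its slowest stage."""
    stage = min(stage_rates, key=lambda name: stage_rates[name])
    return stage, stage_rates[stage]


def review_backlog(write_rate: float, review_rate: float, days: int) -> float:
    """Work that arrives faster than it can be reviewed piles up in the queue."""
    return max(0.0, write_rate - review_rate) * days


# Illustrative numbers only (changes per day), not data from any real project.
before = {"write": 2.0, "review": 6.0, "deploy": 20.0}
after = {**before, "write": 40.0}  # code generation speeds up 20x

print(bottleneck(before))  # ('write', 2.0)
print(bottleneck(after))   # ('review', 6.0)
print(review_backlog(after["write"], after["review"], days=30))  # 1020.0
```

With review still at six changes a day, a month of generating forty a day leaves over a thousand changes waiting, the same kind of pile-up Ronacher describes.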

He argues that the human is the final bottleneck not because of any technical limitation, but because accountability cannot be delegated to a machine. Someone still has to be responsible for what ships. Until that changes, humans remain in the loop by necessity rather than by choice.

The Open Source Analogy

This bottleneck dynamic is not entirely new to software development, and there is a useful precedent worth considering. Those of us who have been around long enough remember when open source was subject to a version of the 80/20 rule: back in the day, a framework, package, or module would cover 80% of what you needed, but we joked that the missing 20% would consume 80% of your time. Today we routinely depend on thousands of open source packages without thinking twice. That shift massively accelerated what individual developers and small teams could build.

The reason open source outgrew its 80/20 problem is instructive. Engagement grew exponentially, communities formed, testing practices improved, documentation was created, and package managers made dependency management tractable. The ecosystem caught up with the code.

AI-assisted development may follow a similar arc. The analogy is encouraging. But there is an important difference worth noting: a mature open source package is stable and well understood. You can read its source, check its test suite, and rely on the fact that thousands of other developers have already found its edge cases. AI-generated code is novel every time. The accountability problem Ronacher identifies does not go away just because the tooling matures. In the end, as with open source, humans still have to ensure the right tools are used in the right ways. The bottleneck moves, but it does not disappear.

What to Make of This

These four sources, taken together, paint a picture of where we are with AI-assisted development, even though none of them set out to do so.

The most important conclusion is the one I opened with: this is not optional anymore. AI tools have crossed a threshold of speed and code quality that creates real competitive pressure. Teams and individuals using them well are delivering more, faster, and tackling projects that would simply not have been feasible before. That combination of acceleration and expanded scope is what makes this moment different from previous waves of developer tooling.

At the same time, the technology has not stabilized and the rate of change is genuinely difficult to keep up with. Thoughtworks, whose entire purpose with their Radar is assessing and categorizing technology, noted in this volume that some entries were less than a month old when they tried to evaluate them. When a publication built around careful, deliberate assessment struggles to keep pace, that tells you something about how fast things are actually moving. Keeping generally current is necessary. Keeping up with everything is probably not realistic.

The third conclusion is one every source touches on in some form: human expertise and oversight remain essential. AI is a powerful implementation engine, but design, architecture, and accountability are still human responsibilities. Lalit learned this the hard way with a full rewrite. Ronacher frames it as a structural requirement: non-sentient machines cannot carry responsibility, so humans remain in the loop by necessity. The radar’s cognitive debt warning makes the same point from a different angle: when developers stop understanding the code they are shipping, the consequences quietly accumulate.

The fourth conclusion is that humans are more of a bottleneck than ever, which puts more pressure on us. The sheer volume and speed of AI-generated work is creating new demands on the people who have to review, validate, and take responsibility for it. Yegge’s value capture dilemma is a concrete version of this: who actually benefits when a developer is asked to become “dramatically more productive” (potentially working even harder)? The answer is not yet clear, and the tension between AI’s output rate and human capacity for oversight has not reached any kind of equilibrium. This is not just an individual stress problem. It is a structural challenge for teams, companies, and the industry.

Coming Up

None of this happens in a vacuum, and it raises topics I want to dig into in the next post. The improvements that brought us here, including better reasoning, larger context windows, agentic workflows, and raw model quality, all come with costs: direct monetary costs, certainly, but also indirect costs and additional complexity.

That is where we will pick this up next time.